As ChatGPT rises in popularity, changing the learning environment of higher education, Calvin professors are making adjustments to their classroom policies.

In November 2022, OpenAI, an artificial intelligence (AI) research laboratory, publicly debuted ChatGPT, a chatbot that allows users to access information on a variety of topics by entering prompts. In subsequent months, the app surged to the top of both Apple’s App Store and the Google Play store, making it the fastest-growing app of its time. The public availability of GPT-3.5, the model behind ChatGPT at launch, has given OpenAI a plethora of data to train with, allowing the company to improve subsequent models.

Following ChatGPT’s sudden rise to popularity, other large tech companies introduced competing efforts, like Meta’s LLaMA and Google’s PaLM. OpenAI has since released GPT-4, an even larger model with more features. Access to GPT-4 is restricted, however, to those willing to pay for it. According to Keith Vander Linden, professor of computer science (CS), “Some technologies come and go. This is a big one. It’s going to affect all information-processing tasks.”

The human-like quality of ChatGPT’s answers makes it useful for students’ writing, and those in administration have been keen to react.

“There is no question that technologies such as ChatGPT will change the way we approach drafting text, but to me, the open question is how much they will change the nature of writing itself,” said Kristine Johnson, Calvin University’s Rhetoric Director, in a public response to ChatGPT’s growing popularity.

Most ChatGPT responses, when copied word for word, can be easy to detect. Tools like ZeroGPT examine the statistical probability of two words appearing next to each other and estimate the likelihood that a text was written by ChatGPT based on how frequently certain words appear and where.

Turnitin, a work submission tool used by many courses at Calvin and elsewhere, has already incorporated this functionality.
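The word-adjacency idea behind such detectors can be illustrated with a toy example. The Python sketch below is not how ZeroGPT or Turnitin actually work; their methods are not described here. It is a minimal illustration, with hypothetical function names, that assumes a simple bigram (word-pair) model with add-one smoothing: it counts how often each pair of adjacent words appears in a reference text, then scores a new text by how predictable its word pairs are.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def train_bigram_counts(reference_text):
    """Count single words and adjacent word pairs in a reference text."""
    tokens = tokenize(reference_text)
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    return unigrams, bigrams


def average_log_probability(text, unigrams, bigrams):
    """Average log P(next word | previous word), with add-one smoothing.

    Scores closer to zero mean the word pairs are more predictable
    under the reference counts. This is an illustrative assumption,
    not the scoring rule of any real detector.
    """
    tokens = tokenize(text)
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        return 0.0
    vocab_size = max(len(unigrams), 1)
    total = 0.0
    for prev, nxt in pairs:
        # Counter returns 0 for pairs or words it has never seen.
        prob = (bigrams[(prev, nxt)] + 1) / (unigrams[prev] + vocab_size)
        total += math.log(prob)
    return total / len(pairs)


# Hypothetical reference text standing in for "typical" model output.
reference = "the cat sat on the mat the cat sat on the rug"
unigrams, bigrams = train_bigram_counts(reference)

predictable = average_log_probability("the cat sat on the mat", unigrams, bigrams)
unusual = average_log_probability("mat the on sat cat the", unigrams, bigrams)
print(predictable > unusual)
```

In this toy setup, text whose word pairs closely match the reference scores closer to zero than text with unusual pairings. A real detector would rely on a far larger language model and additional signals, so a score like this should be read only as a rough illustration of the statistical idea.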

Unlike some professors, who have prohibited the use of ChatGPT in the classroom, Vander Linden’s policy goes both ways. Vander Linden teaches an upper-level class focused on the semester-long development of a mobile app; for this class, he has encouraged the use of ChatGPT and other AI language models.

The rationale behind this, Vander Linden said, is that it would allow students to build larger, higher-quality apps. In this context, Vander Linden said that “the only concern I have is that none of us know how to use [ChatGPT] very well, yet.”

However, Vander Linden isn’t going to allow AI in all situations. “I’m going to retain my paper quizzes,” he said.

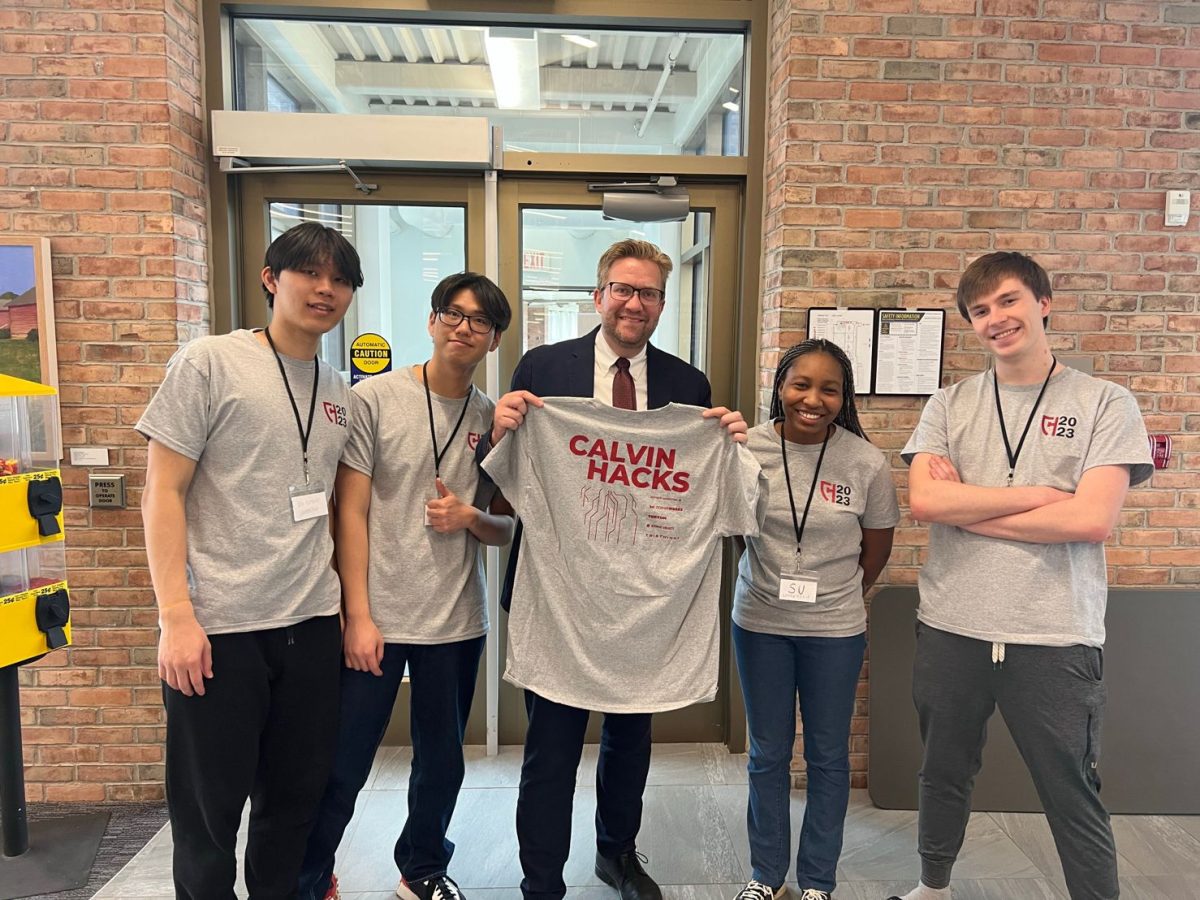

Bruce Abernethy, the director of software development at Magic-Wrighter, a Grand Rapids-based fintech software company, and a member of the Calvin CS department’s Strategic Partners Council, addressed concerns about cheating during a talk at a Calvin CS seminar on AI.

Abernethy often uses AI to write software for his company. In his talk, he noted the benefits of AI for speeding up development, generating test input data, enabling easy cross-platform integration, and translating, explaining, and documenting existing code.

For students, using AI may or may not be cheating, depending on the situation, according to Abernethy. However, Abernethy also pointed out additional considerations for students using AI on assignments.

“If you’re skipping certain steps, you’re really cheating yourself out of learning those basic skills,” said Abernethy.

Vander Linden also warned of the dangers of using ChatGPT as a substitute for learning. “ChatGPT will give you answers, and if you don’t know the right answer deeply, you’re not going to be able to recognize it,” Vander Linden said.

The AI also sometimes gives inaccurate information. Due to the opaque nature of AI-based language models, it can be hard to diagnose exactly what went wrong when a faulty answer is given.

“It hallucinates,” Abernethy said in his seminar talk. “I can’t tell you how many times I’ve gotten code back that’s wrong, doesn’t work, [or] it’s from outdated frameworks.”

Although the program poses some issues, Vander Linden doesn’t believe that ChatGPT will be the worst invention of all time. “It’s called presentism, to always believe that today’s problems are the worst ever in history; an order of magnitude worse than the last one. And it just isn’t,” said Vander Linden.